RAIVE – Responsible AI Validation Engine

Assessing and optimizing AI-assisted processing, data processes, governance and ethics

Artificial intelligence (AI) provides the basis for numerous new business models and use cases. At the same time, using AI entails risks. Incorrect decisions caused by improperly trained analytical models can lead not only to additional costs but also to reputational damage. Such risks are particularly acute if test data contains a previously undetected bias or if the principle of dual control is not observed. Legal risks are also growing as regulatory requirements for the use of AI algorithms increase.

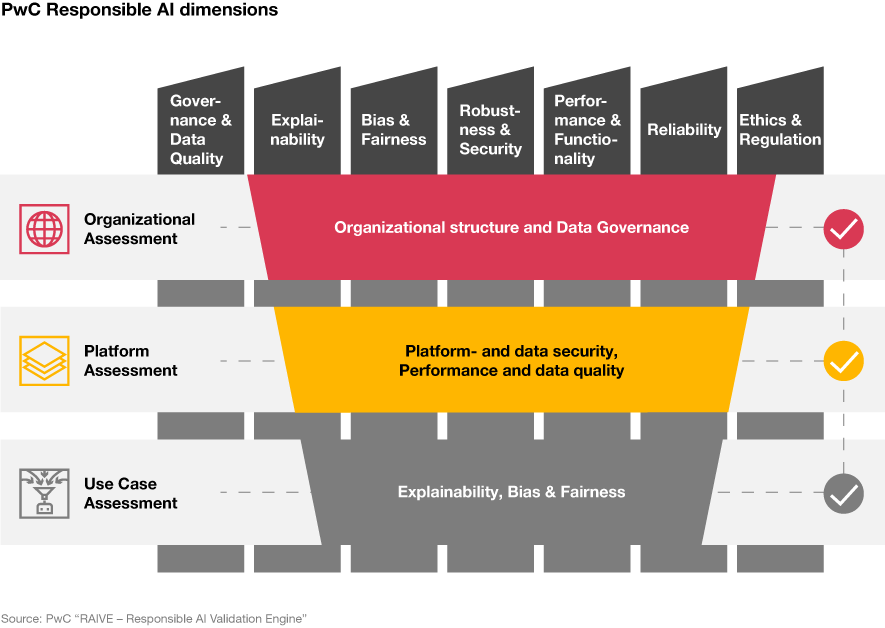

RAIVE offers a structured and established procedure for assessing your approach to AI algorithms and takes account of:

- the level of the company as a whole

- individual platforms

- specific application cases

RAIVE helps you optimize the results of analytical models with regard to their security and explainability. As part of a maturity assessment, you receive a “responsible AI readiness” rating and specific action items for increasing your level of maturity.

"Ensuring adherence to regulatory requirements is one of the challenges I see our clients face on a daily basis. This is where RAIVE comes in to provide assistance."

RAIVE in a nutshell

Our solutions

Self-assessment

Prior to the actual assessment by PwC, our self-assessment will give you an initial impression of the maturity level of your organization, business processes and platforms as well as the implementation of your AI use cases. The self-assessment takes only a few minutes.

Immediately rate your responsible AI maturity level:

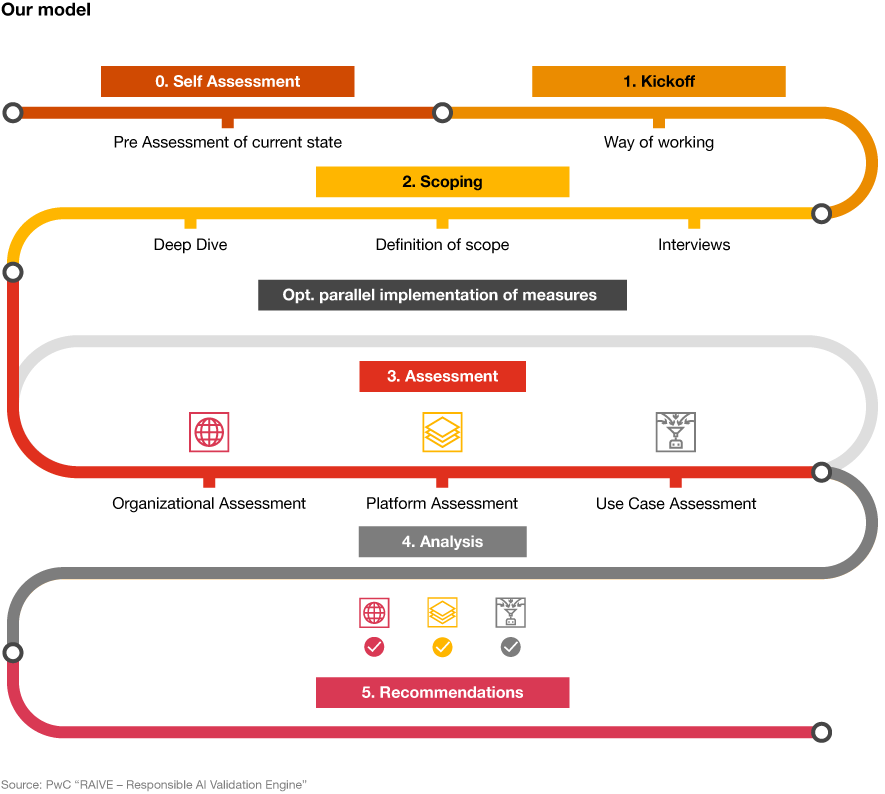

Assessment

The assessment by PwC is tailored to your needs and consists of five phases. After a kick-off meeting, in which we clarify the general conditions, the scoping phase lets you flexibly select the modular assessment components on which you would like to focus. We make sure that all dependent topic areas are taken into consideration as well. At the end, you receive a precise classification of your maturity level, specific measures to be carried out and, if desired, support with implementation.

Implementation

We will be pleased to assist you with the implementation of the measures identified. Depending on the scope of the assessment, this can include optimizing your organizational and governance processes as well as fine-tuning the AI processes used.

The benefits for you

- Standardized and structured procedure

- Audit-proof

- Avoiding reputational damage and legal consequences

- More transparency

Standardized and structured procedure

RAIVE provides companies with a sophisticated and tested framework for assessing existing processes or planning the use of AI in a future-proof manner. In this context, PwC doesn’t merely determine the availability of technology; rather, it assesses existing processes, use cases and platforms in detail.

Audit-proof

RAIVE has been developed based on a PwC best practice policy for responsible AI. Our audit colleagues can attest to its efficacy if they are involved at subsequent stages. Simply ask and we will be pleased to put you in contact with them.

Avoiding reputational damage and legal consequences

With RAIVE, it is possible to detect organizational gaps at the level of technology. Potential negative consequences, such as reputational damage or even chain reactions with far-reaching legal ramifications, can thus be prevented.

More transparency

Problems not previously identified are transparently presented under the headings of “organization”, “platforms” and “use cases”. With specific action items, you will be able to draw up a roadmap for implementation.

"Artificial intelligence is currently on everyone’s lips. However, in order for it to be used operationally, it is of the utmost importance that models be continuously monitored and that the explainability of results is guaranteed."